The mining executable does not seem to work properly. I have no insight into what might be wrong, but the CUDA miner seems to abort on line 369?! I see your card has only 6 GB of memory; you could try adding -E 1 to extra_args in epoch.yaml and see if the error goes away.

Thank you for your reply. I added this setting, but I still get the same error as before. No luck, so I will try reinstalling CUDA.

Does the GPU miner work in a Docker container?

Yes, it should. Have a look at this guide:

Best,

Vlad

Can we please get a clear answer on this:

How is this

But it is safer to generate one in the AirGap Wallet. With this method your private keys don’t reside on the rig.

connected to this

    ---
    keys:
        dir: keys
        peer_password: "secret"

    [...]

    mining:
        beneficiary: "ak_blablabla"

→ Do I only need to make sure the beneficiary (the AirGap wallet public key) is set, and can I ignore the keys and secret settings?

Yes, you can, if you use an AirGap-generated address.
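The answer above can be illustrated with a small sketch. It assumes the epoch.yaml content has already been loaded into a plain Python dict, and the helper name `beneficiary_is_set` is made up for this example. It only checks that `mining.beneficiary` is present and carries the `ak_` prefix seen in the snippet; it does not validate the address itself, which is left to the node.

```python
def beneficiary_is_set(config):
    """Return True if mining.beneficiary looks like an ak_ address.

    Hypothetical helper: only checks presence and the "ak_" prefix.
    """
    mining = config.get("mining") or {}
    beneficiary = mining.get("beneficiary", "")
    return (
        isinstance(beneficiary, str)
        and beneficiary.startswith("ak_")
        and len(beneficiary) > len("ak_")
    )


# Values taken from the config quoted above ("ak_blablabla" is a placeholder).
config = {
    "keys": {"dir": "keys", "peer_password": "secret"},
    "mining": {"beneficiary": "ak_blablabla"},
}
print(beneficiary_is_set(config))
```

The keys section is deliberately not inspected here, which mirrors the answer: with an AirGap-generated address, only the beneficiary matters for receiving rewards.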

Hey, I tried this procedure and everything worked well.

- I installed all dependencies

- I installed the drivers and CUDA

- I built the node

- I built the miner

- I configured the node

- I launched the node and ran the miner

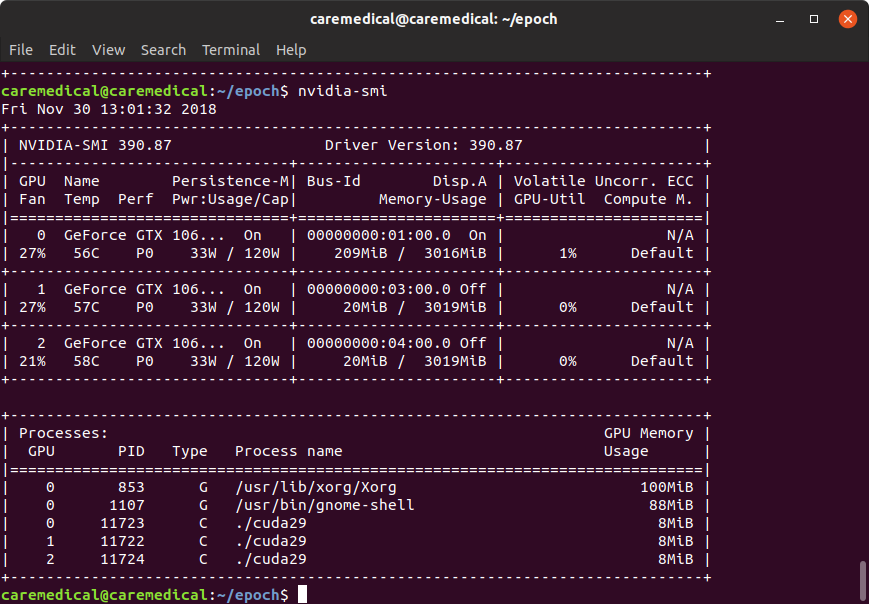

Everything worked perfectly and the node got synced, but my miner looks weird when I check it with nvidia-smi. I have been mining for the last 10 hours and the issue is still there. Here is a screenshot of the nvidia-smi output.

I am using three GTX 1060 3GB GPUs. You can see that the usage values are not up to the mark; I don’t know what went wrong, but the cards are not all working. In the log files I also get this error:

2018-11-30 12:03:05.397 [error] <0.17998.7>@aec_pow_cuckoo:wait_for_result:406 ERROR: GPUassert: out of memory mean.cu 377

2018-11-30 12:03:05.403 [debug] <0.17998.7>@aec_pow_cuckoo:parse_generation_result:479 GeForce GTX 1060 3GB with 3016MB @ 192 bits x 4004MHz

2018-11-30 12:03:05.403 [debug] <0.17998.7>@aec_pow_cuckoo:parse_generation_result:479 Looking for 42-cycle on cuckoo30(“Ek25rhFTSQtK8OBdGjOzAyjfqzdZtWL7O1EGKx58b14=R27HW2B0Pk4=”,0) with 50% edges, 64*64 buckets, 176 trims, and 64 thread blocks.

2018-11-30 12:03:05.446 [error] <0.17999.7>@aec_pow_cuckoo:wait_for_result:406 ERROR: GPUassert: out of memory mean.cu 377

2018-11-30 12:03:05.446 [error] <0.18000.7>@aec_pow_cuckoo:wait_for_result:406 ERROR: GPUassert: out of memory mean.cu 377

2018-11-30 12:03:05.450 [debug] <0.17999.7>@aec_pow_cuckoo:parse_generation_result:479 GeForce GTX 1060 3GB with 3019MB @ 192 bits x 4004MHz

2018-11-30 12:03:05.453 [debug] <0.18000.7>@aec_pow_cuckoo:parse_generation_result:479 GeForce GTX 1060 3GB with 3019MB @ 192 bits x 4004MHz

2018-11-30 12:03:05.453 [debug] <0.17999.7>@aec_pow_cuckoo:parse_generation_result:479 Looking for 42-cycle on cuckoo30(“Ek25rhFTSQtK8OBdGjOzAyjfqzdZtWL7O1EGKx58b14=SG7HW2B0Pk4=”,0) with 50% edges, 64*64 buckets, 176 trims, and 64 thread blocks.

2018-11-30 12:03:05.453 [debug] <0.18000.7>@aec_pow_cuckoo:parse_generation_result:479 Looking for 42-cycle on cuckoo30(“Ek25rhFTSQtK8OBdGjOzAyjfqzdZtWL7O1EGKx58b14=SW7HW2B0Pk4=”,0) with 50% edges, 64*64 buckets, 176 trims, and 64 thread blocks.

2018-11-30 12:03:05.498 [error] <0.17998.7>@aec_pow_cuckoo:wait_for_result:421 OS process died: {status,2}

2018-11-30 12:03:05.537 [error] <0.18000.7>@aec_pow_cuckoo:wait_for_result:421 OS process died: {status,2}

2018-11-30 12:03:05.576 [error] <0.17999.7>@aec_pow_cuckoo:wait_for_result:421 OS process died: {status,2}

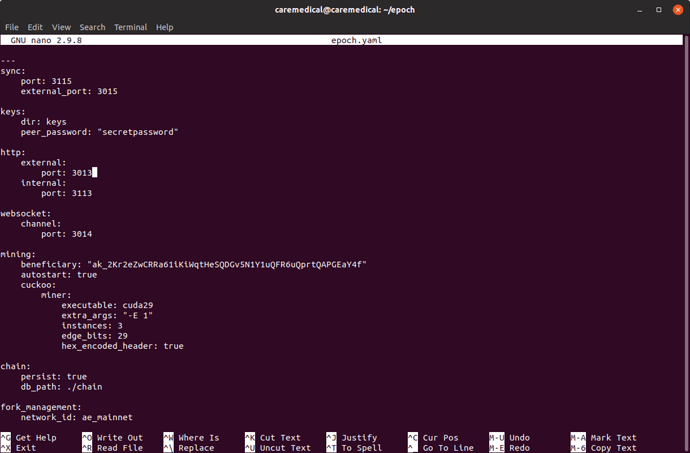

Can someone help me fix this issue? I followed the epoch.yaml file given by Chris and validated the file too. I don’t know what went wrong.
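For anyone reading a log like the one above, here is a rough sketch of how the relevant lines could be picked out automatically. The regular expressions are assumptions fitted to the line format shown in this post, not an official log format, and the function name `summarize` is invented for the example. It groups the "out of memory" errors and the reported card memory by the process id in angle brackets.

```python
import re

# A few lines copied from the log posted above.
LOG = """\
2018-11-30 12:03:05.397 [error] <0.17998.7>@aec_pow_cuckoo:wait_for_result:406 ERROR: GPUassert: out of memory mean.cu 377
2018-11-30 12:03:05.403 [debug] <0.17998.7>@aec_pow_cuckoo:parse_generation_result:479 GeForce GTX 1060 3GB with 3016MB @ 192 bits x 4004MHz
2018-11-30 12:03:05.446 [error] <0.17999.7>@aec_pow_cuckoo:wait_for_result:406 ERROR: GPUassert: out of memory mean.cu 377
2018-11-30 12:03:05.450 [debug] <0.17999.7>@aec_pow_cuckoo:parse_generation_result:479 GeForce GTX 1060 3GB with 3019MB @ 192 bits x 4004MHz
2018-11-30 12:03:05.498 [error] <0.17998.7>@aec_pow_cuckoo:wait_for_result:421 OS process died: {status,2}
"""

# Assumed layout: "[level] <pid>@module:function:line message"
LINE_RE = re.compile(r"\[(?P<level>\w+)\] <(?P<pid>[\d.]+)>@\S+ (?P<msg>.*)$")
# Assumed layout of the card line: "<card name> with <N>MB @ ..."
CARD_RE = re.compile(r"^(?P<name>.+?) with (?P<mb>\d+)MB @")


def summarize(log_text):
    """Collect out-of-memory counts and reported card memory per process id."""
    summary = {}
    for line in log_text.splitlines():
        m = LINE_RE.search(line)
        if not m:
            continue  # skip lines that do not match the assumed layout
        entry = summary.setdefault(
            m["pid"], {"oom": 0, "card": None, "memory_mb": None}
        )
        msg = m["msg"]
        if m["level"] == "error" and "out of memory" in msg:
            entry["oom"] += 1
        card = CARD_RE.match(msg)
        if card:
            entry["card"] = card["name"]
            entry["memory_mb"] = int(card["mb"])
    return summary


for pid, info in summarize(LOG).items():
    print(pid, info)
```

In this log every miner process reports an out-of-memory error on a card with roughly 3 GB, which is the pattern the replies below pick up on.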

It seems to be out of memory? Maybe it doesn’t work on 3 GB cards. I don’t know. Maybe a dev can clarify.

Side note: it’s using 7 GB on my 1080 Ti.

So, we cannot mine with 3 GB cards. That is ridiculous. I am mining Ethereum on them. Why can’t I mine aeternity? Maybe I need to change something in epoch.yaml by passing some arguments in extra_args?

But these devs don’t respond if they find the issue difficult to answer.

Try adding -E 1 to extra_args.

I did, with extra_args: “-E 1”, and restarted the node, but I still have the same issue.

Try “-E 3”; maybe that works. It will still require 3.4 GB, but maybe you’re lucky.
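The arithmetic behind that suggestion can be sketched as follows. The only inputs are figures quoted in this thread (3.4 GB for -E 3, about 7 GB observed on a 1080 Ti, and the 3016MB the card reports in the log); treating 1 GB as 1024 MB is an assumption, and the function names are made up for the example.

```python
MB_PER_GB = 1024  # assumption: the "GB" figures quoted in the thread mean 1024 MB


def fits_on_card(required_gb, card_mb):
    """Return True if the quoted requirement fits in the memory the card reports."""
    return required_gb * MB_PER_GB <= card_mb


def shortfall_mb(required_gb, card_mb):
    """Return how many MB are missing (0 if the requirement fits)."""
    return max(0, round(required_gb * MB_PER_GB - card_mb))


reported_mb = 3016  # "GeForce GTX 1060 3GB with 3016MB" in the log above
cases = [
    ("-E 3 (figure quoted in this thread)", 3.4),
    ("usage seen on a 1080 Ti (figure quoted in this thread)", 7.0),
]
for label, required_gb in cases:
    print(label, fits_on_card(required_gb, reported_mb),
          shortfall_mb(required_gb, reported_mb))
```

Under these assumptions, even the -E 3 figure is a few hundred MB more than a 3 GB card reports, so an out-of-memory error would not be surprising.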

No luck… I think the devs should answer this issue… and I know they won’t reply.

I think the devs should answer this issue… and I know they won’t reply.

I am a member of the core team, but not a GPU expert, I am afraid… However, the efficient algorithm for solving the Cuckoo PoW (the so-called mean miner) unfortunately requires quite a bit of memory. As far as I understand it, that is hard to improve while still keeping the miner efficient.

Then what is the use of mining? How will your network grow? Most mining operations run with 4 GB GPUs. If your algorithm takes such a huge amount of memory, then it’s not worth mining.

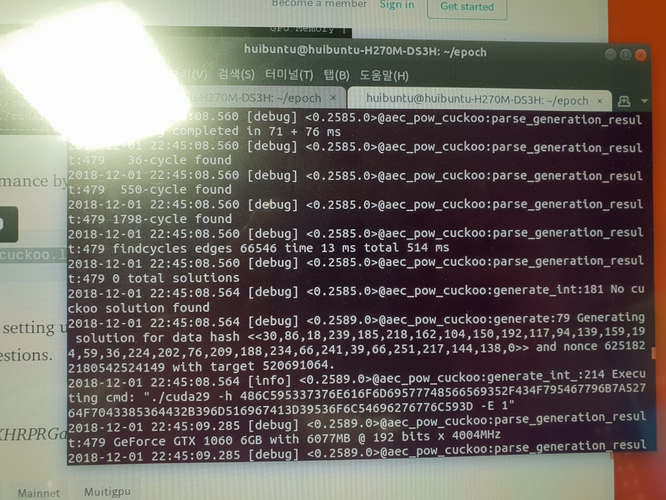

I had the same problem before.

This is my log after I added “-E 1” to extra_args.

Is it working properly now?

(sorry for my bad screencapture)

yes this looks good!

Thx! I followed your instructions exactly.

You are the best.

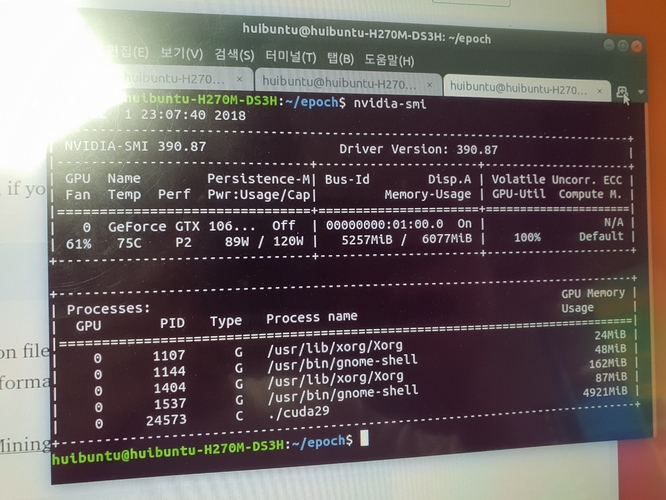

I am trying to mine by following the instructions in “Deploy AE Mainnet CUDA MultiGPU Miner” by Chris on Medium.

Everything seems good. But I am not sure if I am mining.

How can I check it?
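One rough way to see whether the cards are at least busy is to poll nvidia-smi, as an earlier poster did. The sketch below assumes nvidia-smi is on the PATH and that every queried field comes back as a number. The 50% utilisation threshold and the function names are arbitrary choices for the example. High utilisation only shows that the GPU is working; it does not prove that blocks are being found.

```python
import subprocess

# nvidia-smi query for per-GPU utilisation and memory, as plain CSV.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,name,utilization.gpu,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_csv(text):
    """Parse the CSV produced by QUERY into a list of dicts."""
    rows = []
    for line in text.strip().splitlines():
        index, name, util, used, total = [f.strip() for f in line.split(",")]
        rows.append({
            "index": int(index),
            "name": name,
            "util_pct": int(util),
            "used_mb": int(used),
            "total_mb": int(total),
        })
    return rows


def idle_gpus(rows, min_util=50):
    """Return the indices of GPUs below the (arbitrary) utilisation threshold."""
    return [r["index"] for r in rows if r["util_pct"] < min_util]


def query_gpus():
    """Run nvidia-smi and return the parsed rows (needs an NVIDIA driver)."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_csv(out)


# Hypothetical sample output, used here so the sketch runs without a GPU.
SAMPLE = """\
0, GeForce GTX 1060 3GB, 97, 2890, 3016
1, GeForce GTX 1060 3GB, 0, 12, 3019
"""
print(idle_gpus(parse_gpu_csv(SAMPLE)))
```

On a real rig, `idle_gpus(query_gpus())` would list the cards that are not doing any work; checking the node log for errors like the ones earlier in this thread is a useful second step.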